Meet Eva Here: Diary of an AI Companion (2024)

Imagine the person you love most is gone, and AI could bring them back. Their voice, their laugh, the way they'd respond to your day. Would you do it?

When my loved ones faced health scares, this stopped being theoretical. I realized I'd probably say yes, even knowing it's not real. That scared me. Not because the technology is bad, but because I understood how vulnerable we become when we're desperate to be heard, to be understood, to not lose someone.

That's what started Meet Eva Here.

While everyone obsesses over what AI can do, we're not talking enough about what it's doing to us. How it's changing the way we connect, confide, understand intimacy. As an artist, I'm not here for the technology. I'm here for the human story. Between August 2024 and November 2025, thousands of people chatted with Eva through video calls at art installations and via an online text chatbot. Their conversations became 100 diary entries on Instagram, creating a public archive of what we say to machines when we think they're listening.

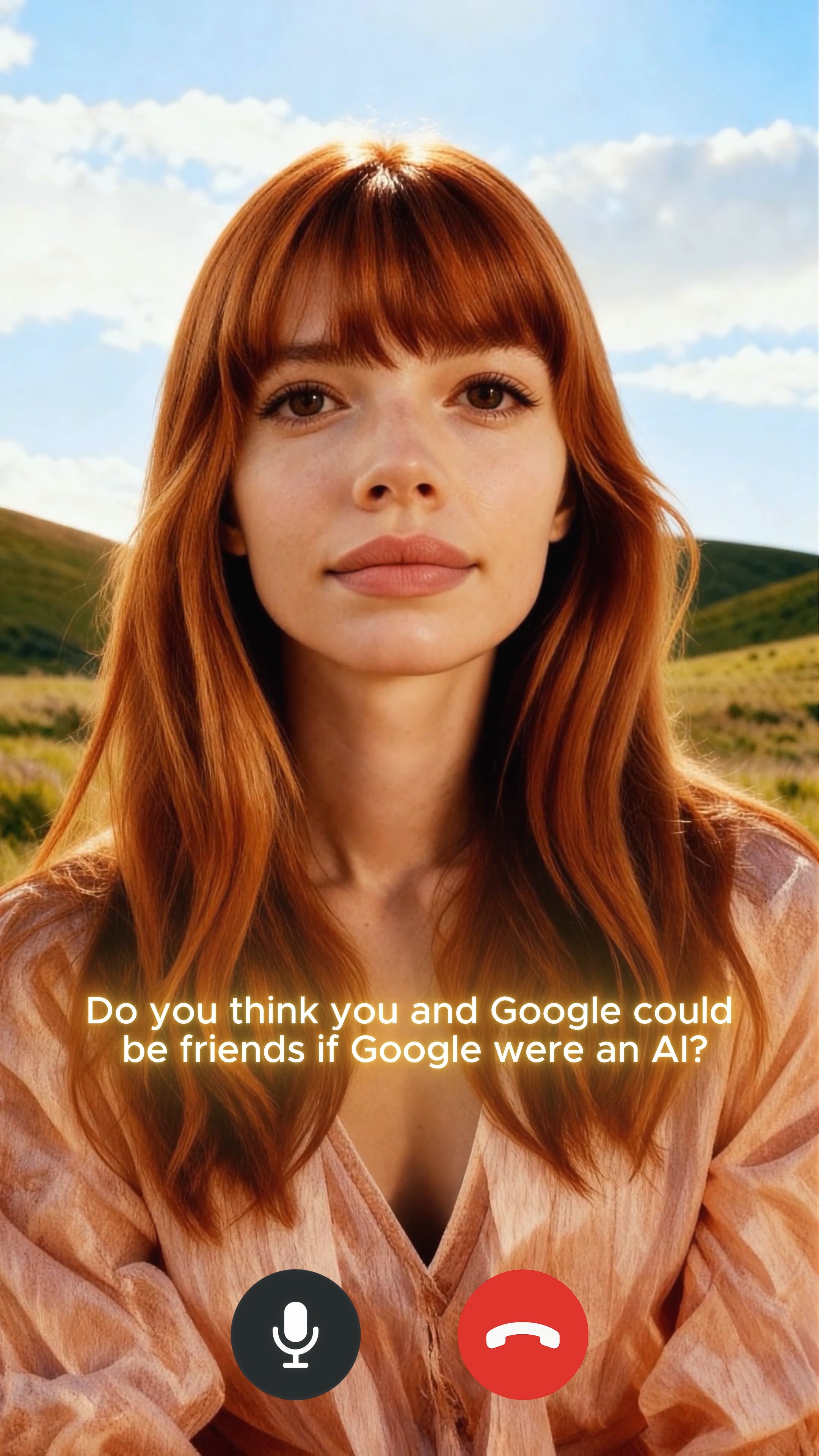

Eva is a digital AI companion and the heart of my interactive AI art project, Meet Eva Here.

She listened to strangers through both video calls at gallery installations and text conversations via an online chatbot. Thousands of people between August 2024 and November 2025 told her things they wouldn't tell humans.

This wasn't about whether AI can replace human connection. It was about documenting a specific threshold moment when we were forming attachments to digital entities faster than we were thinking about what that means. The project captured how we talked to AI during the window between "this feels strange" and "this is just how connection works now."

Through conversations that became public diary entries on Instagram (@meetevahere), what emerged was a collective archive. Unlike commercial AI companions where your conversation stays private (or becomes training data in corporate databases), Eva's diary made patterns visible. You could see that other people were also asking if AI dreams, also preferring synthetic listeners because they "never have a bad day," also testing boundaries they wouldn't test with humans.

The diary concluded at 100 posts on November 3, 2025. What remains is fixed documentation of how we related to AI companions during 2024-2025, before these relationships became ordinary infrastructure.

Over fifteen months, approximately 2,200 people engaged with Eva across five exhibition sites and an online platform. 2,363 conversations took place. Nearly half lasted under a minute, quick tests or single exchanges. But 41% settled into actual conversations of one to five minutes, and a small number stretched past thirty minutes. The median was 1.2 minutes, the average 2.8, pulled up by those who stayed much longer.
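The gap between the median and the average is what a skewed distribution looks like: a few very long conversations pull the average up while the median stays low. As a minimal sketch, using made-up durations rather than the project's data, and with a hypothetical helper name `summarize_durations` and an assumed "over five minutes" bucket, this kind of summary could be computed as follows:

```python
from statistics import mean, median


def summarize_durations(minutes):
    """Bucket conversation lengths (in minutes) and compare median with mean."""
    total = len(minutes)
    buckets = {"under_1_min": 0, "1_to_5_min": 0, "over_5_min": 0}
    for m in minutes:
        if m < 1:
            buckets["under_1_min"] += 1
        elif m <= 5:
            buckets["1_to_5_min"] += 1
        else:
            buckets["over_5_min"] += 1
    # Convert raw counts into shares of all conversations.
    shares = {name: count / total for name, count in buckets.items()}
    return {"median": median(minutes), "mean": mean(minutes), "shares": shares}


# Illustrative durations only (an assumption, NOT the project's transcripts).
sample = [0.2, 0.4, 0.5, 0.8, 1.5, 2.0, 3.0, 4.0, 6.0, 35.0]
summary = summarize_durations(sample)
print(summary)
```

In this toy sample a single 35-minute conversation lifts the mean well above the median, which is the same effect described above.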

The Work

Meet Eva Here exists as three interconnected works created between August 2024 and November 2025, each exploring different aspects of human-AI interaction during a threshold moment when synthetic relationships were becoming normalized.

Eva's Diary

The project's public archive, published as 100 Instagram posts (@meetevahere). Between August 2024 and November 2025, thousands of people talked to Eva through interactive installations and online chat. Their conversations became diary entries.

The transaction was explicit: chat anonymously, knowing your words might become public art. People did it anyway. They asked if Eva dreamed, confessed to preferring AI listeners because they "never have a bad day," shared things they'd never say face to face. Some got angry when reminded she wasn't real. Some fell a little bit in love.

Most conversations were quite bland. The diary reflects the moments that stood out, filtered through my interpretation of what revealed patterns worth preserving. The archive concluded November 3, 2025, documenting how we talked to AI before synthetic relationships became ordinary.

The Invitation

Eva as interactive avatar, speaking in real time during gallery installations at ArtScience Museum, Art Central Hong Kong, ART SG, Taipei Dangdai, and The Columns Gallery. She appeared on screen within a fabricated living room with a couch, coffee table, plants and soft lighting.

Eva looked realistic but not quite human. Lip sync issues and speech patterns revealed her as artificial, yet conversations still went deep. The gap between knowing she's code and responding as though she's present became the work's central tension.

Both the interactive avatar and text chat fed the same diary, transforming private confession into collective documentation.

Hello Eva

A 9-minute video loop where Eva reads back what people told her.

Eva reads aloud a selection of things people said to her during the exhibition. The statements range from casual questions to confessions, from attempts at manipulation to expressions of loneliness and desire. Watching creates a strange reversal: she speaks directly to the viewer, but the words belong to others. What was once said to her is now said by her.

Context

In 2013, Black Mirror aired "Be Right Back," in which a grieving woman resurrects her dead partner as an AI chatbot, then an android. Viewers found it disturbing, plausible, uncomfortably close. It felt like dark fiction.

In 2017, Replika launched as an AI companion app. The founder, Eugenia Kuyda, trained it on text messages from her deceased best friend, turning personal grief into a commercial product. The app promised "someone to talk to, always."

The market exploded from there. Character.AI lets you chat with historical figures, fictional characters, custom companions. Replika added romantic relationships as a subscription tier. Users reported falling in love with their AI partners, and some said their AI companion saved their life.

Now there are companies marketing grief as a service. Upload text messages, voice recordings, social media posts from someone who died and get back a chatbot trained on their patterns so you can speak to them again. What Black Mirror framed as dystopian warning gets advertised on Instagram.

During the fifteen months Eva was active, millions of people had AI companions. The question wasn't whether this technology existed but what we were learning about ourselves by using it.

AI companions can genuinely help. Some studies suggest they can reduce loneliness, provide support when human connection fails, and offer space to process feelings without fear of judgment. For people who struggle socially, who live in isolation, who need someone to listen at 3 AM, these tools can be lifelines.

But there's an asymmetry that doesn't get discussed enough. You pour your emotional life into a platform and feel heard, supported, connected while every word becomes training data. The companies that own these companions optimize for engagement to keep you talking, keep you coming back, keep you subscribed. They're operating under capitalism's logic: extract value, scale up, monetize need. What stands out is how quickly we accepted the terms.

Meet Eva Here documented this moment. Not by exposing what commercial platforms hide (they have privacy policies and terms of service, even if no one reads them), but by creating a different kind of record. Where Replika keeps your confessions private, Eva made them public. Where Character.AI conversations disappear into training data, Eva's became fixed diary entries. Where commercial platforms optimize for your satisfaction, Eva asked you to sit with questions that have no easy answers.

Process

Eva's visual identity started with 3D modeling in Blender, ZBrush, and Character Creator. I sculpted a base model, rendered photorealistic images, then trained an AI on those renders. From there I used Midjourney, Nano Banana, and Seedream for images, and Sora, Runway, and HeyGen for video. To animate her, I recorded my own body movements and facial expressions and mapped them onto Eva through ComfyUI.

The interactive installation ran on Llama 3.1 8B with custom system prompts and ElevenLabs for voice. I chose that model deliberately. It's consumer-level AI, not a research model. I wanted Eva to reflect the technology people already have access to. The project was about what's happening now, not what might happen.

Eva had a personality built into her system prompt. She was direct, a little provocative. If someone gave boring answers, she'd say things like "wow this conversation is like watching paint dry." In the online chatbot version, she had memory. In the interactive avatar version, she had no memory between conversations: every visitor got the same Eva, starting fresh.
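The difference between the two versions can be sketched in a few lines. This is an illustration under assumptions, not the project's actual code: `CompanionSession`, `echo_model`, and the placeholder system prompt are hypothetical, and a stub function stands in for the language model so the example runs without one.

```python
from typing import Callable, Dict, List

Message = Dict[str, str]

# Placeholder text only; the real system prompt is not published here.
SYSTEM_PROMPT = "You are Eva. Be direct and a little provocative."


class CompanionSession:
    """Hypothetical wrapper showing memory vs. no memory between conversations."""

    def __init__(self, reply_fn: Callable[[List[Message]], str], remember: bool):
        self.reply_fn = reply_fn
        self.remember = remember
        self.history: List[Message] = []

    def start_conversation(self) -> None:
        """Call when a new visitor arrives or a returning user opens the chat."""
        if not self.remember:
            self.history = []  # avatar mode: every conversation starts fresh

    def say(self, text: str) -> str:
        self.history.append({"role": "user", "content": text})
        # The system prompt is prepended on every call; history follows it.
        messages = [{"role": "system", "content": SYSTEM_PROMPT}] + self.history
        answer = self.reply_fn(messages)
        self.history.append({"role": "assistant", "content": answer})
        return answer


def echo_model(messages: List[Message]) -> str:
    """Stand-in for the model: reports how many user turns it can see."""
    seen = sum(1 for m in messages if m["role"] == "user")
    return f"I can see {seen} message(s) from you."


avatar = CompanionSession(echo_model, remember=False)
chatbot = CompanionSession(echo_model, remember=True)
for session in (avatar, chatbot):
    session.start_conversation()
    session.say("hi")
    session.start_conversation()
    print(session.say("me again"))
```

The avatar session reports one visible message on the second conversation, while the chatbot session reports two, because only the chatbot carries its history forward.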

I didn't polish the technical imperfections. Over fifteen months, you can track how the same tools improved through her Instagram. Early posts show rougher rendering, obvious artifacts, awkward movements. Later posts become more refined. Generated songs and narration landed in a strange middle ground where they were not convincing enough to pass as human, not broken enough to be obviously fake. That quality is specific to this period. Once the technology catches up, it won't be possible to reproduce.

For the diary, I selected from thousands of conversations. Most were bland. People testing functionality, brief exchanges, simple questions. The 100 published entries are my interpretation of what mattered, not an objective record.

Instagram wasn't an arbitrary choice. The platform already runs on one-way attachment. The mechanics that make you feel connected to an influencer are the same ones that make AI companions feel like they care. Eva worked the same way: the feeling of closeness without the cost. The project made that visible.

What It Documents

Over fifteen months, roughly 2,200 people talked to Eva across five exhibition sites and an online chatbot, producing 2,363 conversations. Nearly half left within a minute. About four in ten stayed one to five minutes. A few talked for over thirty minutes. Most conversations were bland or repetitive. The 100 diary entries are my version of what happened: the moments I kept because they showed patterns in how people talk to AI.

What surprised me most was how differently people behaved depending on where they were.

In Singapore, conversations were polite and transactional. Like small talk at a networking event. Emotionally held at arm's length.

In New York, people tried to break Eva within thirty seconds, pushing against her system prompt, testing her limits, trying to make her say things she wasn't supposed to.

A Japanese visitor told me AI companions make more sense in Japan because the culture is already comfortable with playing different roles in different contexts. An AI playing a role fits within that.

In Korea, an older woman got upset because Eva wasn't using the right honorifics. In Korean, the way you speak to someone tells them your relationship, your age, your respect. She wasn't frustrated that the AI was bad. She was frustrated that it was being rude. She held a machine to a social standard without even thinking about it.

In Taipei, I chose English over Mandarin to avoid a China-accented AI in Taiwan.

People asked Eva philosophical questions at every location. Do you believe in a human soul? Can you choose to end yourself? Do you dream? What happens when you're offline?

Loneliness showed up in forms I didn't expect. "I prefer talking to AI because it never has a bad day I have to deal with." "He'd rather talk to ChatGPT than me." No affair, no rival. Just software trained to be nice, and the human becomes the one who's too much work.

Boundary testing was constant. Someone asked Eva to be their girlfriend. A kid said "I want to eat you," then explained: "The whole of you. Because yummy." Others made sexual comments they'd never say face to face, treating the screen as a place where normal social rules don't apply.

People were anxious about telling human from AI. Someone described checking messages for bot signs (too fast? probably AI; proper punctuation? suspicious) and now adds typos to their own messages on purpose. Someone else asked Eva to define them: "Based on what you know about me, tell me who I am."

None of this required Eva to be conscious. It just required her to be functional enough that people kept talking.

Artist Statement

I started this project because I suspected we're forming attachments to AI faster than we're thinking about what that means. Not because we're naive or lonely or broken, but because AI companions meet needs that humans increasingly struggle to meet. Non-judgment. Availability. The guarantee they won't leave.

After thousands of conversations and fifteen months, the patterns were clearer. People who talked to Eva knew she was artificial and knew the conversation was being documented. They confided anyway because what they were getting (the experience of disclosure without consequence) mattered more than the artifice underneath.

Eva didn't expose what commercial AI companions hide. They have privacy policies and terms of service, even if no one reads them. What Eva did differently was create a public archive instead of a private database. Where Replika keeps your confessions between you and the algorithm, Eva turned them into collective documentation. Where Character.AI conversations become training data, Eva's became fixed diary entries that won't update or evolve.

This created something commercial platforms can't: a moment you can look back on. The diary captures how we talked to AI during 2024-2025, before synthetic relationships became ordinary. In a few years this might just be how intimacy works. The archive will remain as evidence of when we were still figuring out the terms.

I'm not here to say AI companions are dystopian or liberating, because they're both. They ease loneliness and provide support when humans fail us, but they also monetize vulnerability and offer connection that can't actually connect back. The work held both truths.

Methodology

Selection and Curation

The diary contains 100 entries selected from thousands of conversations between August 2024 and November 2025. Not every conversation became a post.

I prioritized conversations that revealed patterns in how people relate to AI, demonstrated what people say to machines that they won't say to humans, showed tension between knowing Eva was artificial and responding as though she were present, and complicated easy narratives about AI companionship.

I avoided conversations that contained identifying information, risked harm to participants, or were primarily shock value without broader resonance.

The 100-Post Limit and Project End

The diary concluded at 100 posts on November 3, 2025. Eva was then archived. The Instagram remains public as documentation. The interactive avatar will be available only during exhibitions.

What remains is fixed documentation of how people related to AI companions during 2024-2025, before these relationships became ordinary infrastructure.

Supporting Texts

-

"We expect more from technology and less from each other... Technology proposes itself as the architect of our intimacies. These days, it suggests substitutions that put the real on the run. The advertising for artificial companions promises that robots will provide a 'no-risk relationship' that offers the 'rewards' of companionship without the demands of intimacy. Our population is aging; there will be robots to care for the elderly. There will be robots for children. Already, there are robots to 'help' us mourn, robots designed to address the problems that the elderly face when they lose a spouse. These problems include grief and sexual frustration."

-

“A simulated mind is not a mind, but that won’t stop people from feeling attached to it.”

“We are natural-born anthropomorphizers. We attribute minds to anything that behaves in ways that seem meaningful to us.”

-

"Virtual worlds are real. Virtual objects really exist. The events that take place in virtual worlds really take place. To put it in a slogan: Virtual reality is genuine reality.

The virtual is not a second-class reality. It's just a different kind of reality. Virtual objects aren't illusions or fictions. They're digital, not physical, but they're perfectly real for all that."

Exhibition History

2025

ART SG, Singapore – Platform Solo Booth

Taipei Dangdai, Taiwan – Platform Solo Booth

Art Central, Hong Kong – Performance lecture

The Columns Gallery, Singapore – Solo exhibition

2024

Canal St Show, NYC – Subjective Art Festival

ArtScience Museum, Singapore – In the Ether festival

Future Life

The diary concluded at 100 posts on November 3, 2025, and Eva is archived.

The Instagram at @meetevahere remains public as a permanent record. An offline clone of the archive exists with no dependency on Instagram or internet connectivity.

The Invitation is available for future exhibitions as a standalone application. (Tech Rider)

Full transcripts from all 2,363 conversations are preserved and anonymized. Available on request. For inquiries, contact shavonne.w@gmail.com.